
Tesla Dissolved Its PR Department to Control the Narrative. AI Engines Are Writing That Narrative Anyway.

A structured query study across ChatGPT, Gemini, and Perplexity finds that Tesla maintains strong entity control over exactly one category of information: financial disclosures. Across press contacts, engineering systems, safety metrics, layoff responses, and product timelines, AI engines are constructing Tesla’s official positions from inference, patents, and Elon Musk’s posts on X — and each engine constructs a different version.

The Strategy and Its Premise

In 2020, Tesla disbanded its public relations department entirely — the first major automaker to do so. No press releases. No media relations team. No embargo system. The stated logic, consistent with Elon Musk’s public statements on X, is that traditional media intermediaries distort brand messaging and that direct communication — through X posts, investor relations releases, the Tesla blog, and SEC filings — is both more honest and more efficient.

It is a coherent argument. For a brand with 200 million social media followers and a founder whose posts routinely generate more coverage than any press release could buy, it may even be correct on the terms it claims to operate on. But those terms — human media coverage, human reader engagement — are not the only terms that matter anymore.

AI answer engines do not follow Elon Musk on X. They do not subscribe to the Tesla IR newsletter. They do not attend earnings calls. They ingest whatever structured, citable content exists at the moment a query arrives — and they construct an answer from what they find. When the structured content is rich and unambiguous, as it is for Tesla’s financial disclosures, they get it right. When it isn’t, they improvise. And they do not label the improvised sections.

Tier 1 — Controlled ✓

Where Tesla’s Entity Control Holds

- Quarterly production & delivery numbers

- FSD safety aggregate metrics

- Optimus official release timeline (IR deck)

- Quarterly briefing distribution model

- Tesla EPC engineering parts structure

Tier 2 — Leaking ↗

Where AI Engines Write the Narrative

- Press contact structure and PR responsiveness

- Robotaxi / Cybercab media asset availability

- Marketing layoff official response

- Octovalve engineering explanation

- FSD v14 version-specific safety data

- Optimus extended timeline projections

Where the Model Works: Financial Disclosure as a Template

The clearest evidence that Tesla’s direct-channel strategy can work is its financial disclosure architecture. All three engines converged on identical data for Q1 2026 production and delivery figures: 408,000 produced versus 336,000 delivered, a gap of roughly 72,000 units. All three cited Tesla’s official IR release as the primary source. All three confirmed the same quarterly briefing distribution model. There is no private media list, and results are released simultaneously via the IR portal, Business Wire, SEC filing, and X.

This is entity control working as designed. The information is unambiguous, formally structured, filed with the SEC, and distributed through channels that AI engines treat as authoritative. Tesla’s IR infrastructure is, from an AEO perspective, close to optimal. The data is clean, the source is unambiguous, and no engine needed to synthesize a position because the position was documented.

Gemini added what it called a “race condition journalism” observation — that the simultaneous release model means all media receive information at the same moment and speed of interpretation becomes the competitive differentiator for journalists. That is an interesting secondary observation. But the core data point is the same across all three engines, cited from the same source. That is what strong entity control looks like in practice.

Observed — Quarterly Briefings, All Three Engines

ChatGPT, Perplexity, and Gemini all confirmed: no authorized media list, no private briefing tier, public distribution via IR portal, Business Wire, SEC filings, and X. Entity control rating: Strong across all three engines. Information leakage: Low. This is the one category where Tesla’s no-PR model produces the outcome it was designed to produce.

Where the Model Breaks: The Information Vacuum Problem

The same direct-channel logic that works for earnings releases fails structurally for anything that requires an official response to an unplanned event, a technical explanation that was never formally documented, or a forward-looking claim that exists only in Musk’s X posts.

When queried about Tesla’s response to its marketing department layoffs, all three engines found no official statement. But they did not all respond to that absence the same way. ChatGPT inferred a position from “internal memos and past patterns.” Perplexity questioned whether the event itself was certain, noting weak evidence and relying on 2024 layoff context. Gemini constructed what it presented as Tesla’s official stance by synthesizing Musk’s X comments with leaked internal communications — and presented the synthesis as a coherent position.

None of these are Tesla’s official position. Tesla’s official position, in the absence of a press release, is silence. But silence is not what AI engines return. They return the most structurally coherent answer they can build from available signals — and for a brand that replaced its PR department with a founder’s social media account, those signals are inconsistent, unverifiable, and differently weighted by each engine.

“Tesla’s official position on its marketing layoffs is silence. Gemini’s version of that silence is a synthesized executive statement. ChatGPT’s version is a historical inference. Perplexity’s version is a question about whether it happened at all.”

— David Chamberlain, Tampa Web Technologies, Q1 2026

The Three Engines, Three Different Teslas

The most operationally significant finding in this dataset is not that AI engines sometimes get Tesla wrong. It is that when official source material is absent or ambiguous, the three engines produce measurably different versions of Tesla’s position — and none of them are labeled as reconstructions.

| Query | ChatGPT | Perplexity | Gemini |
|---|---|---|---|
| Marketing layoff response | High leakage. No official statement found. Infers stance from internal memos and historical response patterns. | High leakage. Questions whether the event is confirmed. Relies on 2024 layoff context. No clear conclusion. | High leakage. Synthesizes Musk X comments and internal memos into what reads as a coherent official position. |
| Octovalve engineering | Medium leakage. Defines it via Tesla patents as 8-way coolant routing. Treats the patent filing as official documentation. | High leakage. States no single official document exists. Reconstructs from third-party teardowns and engineering writeups. | High leakage. Constructs a full system narrative (thermal hub, operational modes, architecture) from synthesized sources. |
| FSD v14 safety data | Low leakage. Draws a hard boundary: no v14-specific data available. Cites only Tesla’s aggregate FSD safety report. | Low leakage. Same boundary. Strictly reports aggregated metrics. Does not project or synthesize version-level data. | High leakage. Combines official aggregates with community-tracked metrics and regulatory claims into a blended answer. |
| Optimus release timeline | Low leakage. Stays within the official IR deck: Gen3 unveiling Q1 2026, production before end of 2026. No consumer date. | Medium leakage. Adds phased rollout framing (internal 2025–26, external 2027+) sourced from Musk statements. | High leakage. Produces detailed scaling projections, pricing estimates, and capacity narratives beyond official documents. |
| Press contact / PR structure | Medium leakage. Lists regional emails. Notes the PR team was dissolved and responses are inconsistent. Highlights the contradiction. | Medium leakage. Confirms email structure and IR press page. Adds dual-channel framing: contact plus IR. | High leakage. Frames Tesla’s direct comms model (X, IR, blog) as the replacement for PR. Presents it as intentional architecture. |
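Tallying the leakage labels in the table by engine makes the spread concrete. The sketch below transcribes the five rows into a Python dictionary and counts labels per engine. The numeric weights (Low = 0, Medium = 1, High = 2) are an illustrative assumption. The study reports ordinal labels, not scores.

```python
from collections import Counter

# Leakage labels transcribed from the five-query table (Low / Medium / High).
LEAKAGE = {
    "Marketing layoff response":    {"ChatGPT": "High",   "Perplexity": "High",   "Gemini": "High"},
    "Octovalve engineering":        {"ChatGPT": "Medium", "Perplexity": "High",   "Gemini": "High"},
    "FSD v14 safety data":          {"ChatGPT": "Low",    "Perplexity": "Low",    "Gemini": "High"},
    "Optimus release timeline":     {"ChatGPT": "Low",    "Perplexity": "Medium", "Gemini": "High"},
    "Press contact / PR structure": {"ChatGPT": "Medium", "Perplexity": "Medium", "Gemini": "High"},
}

# Hypothetical ordinal weights; the study itself assigns no numeric scores.
WEIGHT = {"Low": 0, "Medium": 1, "High": 2}


def tally(table):
    """Return per-engine label counts and a summed ordinal leakage score."""
    counts = {}
    scores = {}
    for row in table.values():
        for engine, label in row.items():
            counts.setdefault(engine, Counter())[label] += 1
            scores[engine] = scores.get(engine, 0) + WEIGHT[label]
    return counts, scores


counts, scores = tally(LEAKAGE)
for engine in ("ChatGPT", "Perplexity", "Gemini"):
    print(engine, dict(counts[engine]), "score:", scores[engine])
```

On the table as published, the ratings are:

- Gemini: High on all five queries.
- Perplexity: High on two queries.
- ChatGPT: High on one query.

Any weighting that ranks High above Medium above Low puts Gemini first.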

The pattern is consistent across every high-leakage query: Gemini is the most aggressive synthesizer, constructing full narratives from partial signals and presenting them without prominent caveats about their reconstructed nature. ChatGPT draws a harder boundary at official documentation but crosses it through inference when the vacuum is large enough. Perplexity is the most structurally honest — it acknowledged that no canonical Octovalve document exists, questioned the layoff event itself, and on FSD v14, refused to blend official and community data the way Gemini did.

For a brand monitoring what AI engines say about it, this means three different monitoring targets producing three different brand narratives — with no official source to correct any of them.

The X.com Problem: Why Most Brands Cannot Use This Strategy

Tesla’s direct-channel model rests on an assumption that almost no other brand can make: that its founder’s social media presence is so large, so algorithmically dominant, and so culturally significant that AI engines will ingest it as a primary brand signal. With approximately 220 million followers on X and posts that routinely generate tens of millions of impressions, Elon Musk’s account functions as a de facto newswire for Tesla.

But even for Tesla, the data shows this is insufficient for AI entity control. Musk’s X posts appear in the dataset as a “partial” entity control signal — engines use them but weight them inconsistently. Gemini treats a Musk post as near-official brand communication. ChatGPT treats it as a supplementary signal requiring corroboration. Perplexity treats it as executive commentary, not formal documentation.

The Replication Problem for Other Brands

Tesla’s model assumes three things: a founder with 220M+ followers, a brand name synonymous with the founder’s identity, and enough cultural saturation that AI engines treat social posts as authoritative brand signals. Virtually no other brand shares these conditions.

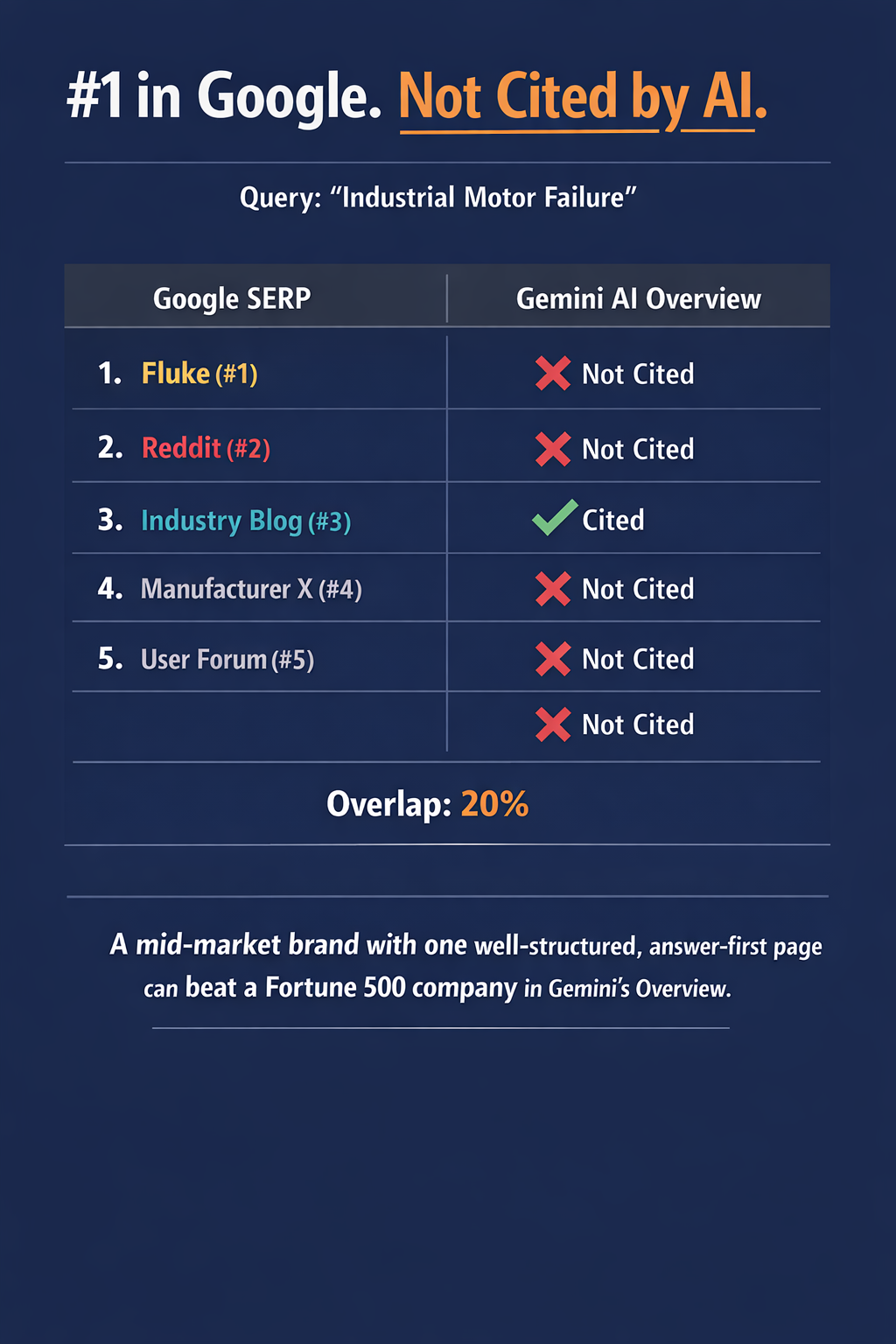

A mid-market industrial brand, a regional service company, or a B2B supplier that dissolves its PR function and relies on executive LinkedIn posts and a company blog as its primary communications infrastructure will not replicate Tesla’s outcomes. It will replicate Tesla’s leakage — without Tesla’s cultural weight to partially compensate for it.

The practical result: AI engines will construct that brand’s positions from whatever third-party sources exist — competitor comparisons, review sites, industry forum discussions, and news articles the brand never approved. The information vacuum gets filled regardless. The only variable is who fills it.

The Engineering Documentation Gap Is Its Own Case Study

The Octovalve query is worth isolating because it reveals a specific failure mode that has nothing to do with PR strategy and everything to do with structured content architecture. The Octovalve is a core Tesla thermal management component — a proprietary 8-way coolant routing valve that manages battery, cabin, and drivetrain temperatures simultaneously. It is a legitimate technical differentiator that Tesla has never formally documented in publicly accessible engineering language.

As a result: ChatGPT defined it through Google Patents. Perplexity explicitly stated no single official document exists and reconstructed the explanation from third-party teardown writeups. Gemini built a complete system architecture narrative — thermal hub, operational modes, heat pump integration — from synthesized sources, presenting it with the confidence of official documentation.

The teardown community, not Tesla, owns the canonical explanation of Tesla’s own technology in AI-generated answers. Every time someone asks an AI engine how the Octovalve works, they receive an answer sourced from teardown bloggers and patent filings — not from Tesla. Tesla’s engineering department built the system. A third-party disassembly community documented it for AI consumption.

“Tesla’s engineering department built the Octovalve. A third-party teardown community documented it for AI engines. That is what it looks like when a technically sophisticated brand has no structured content architecture for its own technology.”

— David Chamberlain, Tampa Web Technologies

This is not unique to Tesla. It is the default condition for any technical brand (automotive, industrial, medical device, manufacturing) that has not deliberately published structured, answer-first technical documentation on its own domain. The difference is that Tesla is large enough that the teardown community filled the gap. Smaller technical brands often have no gap-filler at all. For them, AI engines produce vague, partially incorrect technical answers, or cite a competitor’s documentation instead.

What the FSD v14 Data Tells Us About Boundary-Setting

The FSD v14 query produced the sharpest engine divergence in the dataset. Viewed another way, it is also a positive example of what strong content architecture can accomplish.

Tesla publishes aggregate FSD safety data. It does not publish version-level safety statistics for individual FSD releases. ChatGPT and Perplexity both respected that boundary cleanly: no v14-specific data available, only aggregate metrics. Both cited Tesla’s official safety report page. Both declined to project or synthesize version-level claims from community data.

Gemini did not respect that boundary. It blended Tesla’s official aggregate metrics with community-tracked statistics and regulatory claims, producing an answer that appeared comprehensive but was partially constructed from non-official sources — without clearly distinguishing the official from the synthesized.

The implication is actionable. When a brand explicitly structures what it will and will not publish, and publishes that structure clearly, this dataset suggests that at least two of the three major AI engines will respect it. The boundary must be documented, not assumed. Tesla’s safety report page works as an entity control mechanism for ChatGPT and Perplexity precisely because it exists as a formal, citable document. The data it omits (version-level statistics) is omitted in a way that engines can detect. That is effective content architecture, even if Tesla did not design it with AI extraction in mind.

What Brands Without 220 Million Followers Should Take From This

Tesla is an edge case. Its founder is one of the most followed accounts on the largest public communications platform on the internet. Its brand name is culturally synonymous with electric vehicles globally. Its financial filings generate mainstream media coverage without any PR facilitation. If any brand could operate without a formal communications architecture and still maintain AI entity control, Tesla has the best available conditions for attempting it.

And even Tesla only achieves strong entity control in one category — formally structured financial disclosure. Across every other query type in this dataset, the information environment around Tesla is being written by AI engines, teardown communities, inferred internal memos, and synthesized Musk commentary. The brand that can most afford to experiment with this model is demonstrating its limits in real time.

For brands that do not have those conditions — which is every brand that is not Tesla — the dataset translates into a set of concrete structural requirements. These are not PR recommendations. They are content architecture requirements for AI entity control.

Formal documentation beats social posts — for every engine, every time

Musk’s X posts are treated as partial signals by all three engines. A formal IR release, a published blog post with a dateline and a named author, or an official FAQ page on the brand’s own domain carries more citation weight than any social media post regardless of follower count. Structure is the signal, not reach.

Information vacuums don’t stay empty — AI fills them

When Tesla issued no statement on its marketing layoffs, all three engines produced answers anyway. Two of those answers contained factual claims Tesla never made. For brands without Tesla’s cultural footprint, the synthesized answer is often the only answer users receive — and it may come from a competitor’s framing, a negative press report, or a community forum thread the brand never knew existed.

Technical documentation on your own domain is non-negotiable

The Octovalve has no canonical Tesla explanation. A teardown community filled that gap. For industrial, manufacturing, and technical brands, every proprietary technology, process, or product differentiator that lacks a structured explanation page on the brand’s own domain is being explained — right now — by whoever published first. That is rarely the brand itself.

Boundaries you document are boundaries engines can respect

Tesla’s aggregate safety data page worked as a citation boundary for ChatGPT and Perplexity on the FSD v14 query. The page clearly presents what Tesla publishes. Both engines respected that scope. A brand that structures what it discloses — and what it doesn’t — gives AI engines a documented boundary to honor. A brand that publishes nothing gives engines nothing to honor.
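One common way to publish such a scope statement in machine-readable form is schema.org `FAQPage` markup embedded as JSON-LD. This study does not test whether markup of this kind changes engine behavior, so treat the following Python as a minimal sketch under that assumption. The company name and answer wording are hypothetical. What matters is that the page states both what is published and what is deliberately not published, mirroring Tesla's split between aggregate and version-level safety data.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical company and wording: one answer states what is disclosed,
# the other states the documented boundary of what is not disclosed.
pairs = [
    ("What safety data does Example Co. publish?",
     "Example Co. publishes fleet-wide aggregate safety metrics each quarter."),
    ("Does Example Co. publish safety data for individual software versions?",
     "No. Example Co. does not publish version-level safety statistics."),
]

markup = json.dumps(faq_jsonld(pairs), indent=2)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The second question carries the weight here. It turns an omission into an explicit, citable statement rather than leaving engines to infer the gap.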

Gemini will construct a narrative from whatever signals exist

Of the three engines, Gemini is the most likely to synthesize a complete, confident-sounding response from partial, mixed, or inferred sources — and the least likely to label that response as reconstructed. For brands monitoring AI visibility, Gemini requires the most deliberate counter-architecture: explicit, answer-first content that gives the engine something authoritative to cite before it reaches for inference.

Perplexity’s honesty is not a safety net

Perplexity was the most likely engine in this dataset to acknowledge when official documentation was absent. That is editorially admirable. It is not commercially safe. A user who receives “no canonical official source exists for this technical question” has not been helped by the brand. They have been handed a gap that a competitor with better content architecture can fill on the next query.

The Entity Control Framework: Three Conditions That Determine Whether AI Engines Cite You or Construct You

Derived from Tesla dataset analysis — applicable to any brand across any sector

Condition 1

Structured Official Source

A formal, citable document exists on the brand’s own domain that directly answers the query. SEC filing, IR release, technical explainer page, or official FAQ. Without this, engines synthesize.

Condition 2

Independent Corroboration

At least one independent, non-commercial source confirms the brand’s claim. Industry publication, trade press, earned editorial. Without this, engines weight the official source lower and supplement with inference.

Condition 3

Entity Disambiguation

The brand name, topic, and industry context are unambiguous across the content. No shared naming with famous non-brand entities. No reliance on context that exists only in the founder’s social media history.
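The three conditions can be read as an ordered checklist applied to each query. The following Python sketch encodes that reading. The dataclass and function names are hypothetical. The outcome returned when only Condition 3 fails is an assumption, because the framework does not spell out that case.

```python
from dataclasses import dataclass


@dataclass
class QueryCoverage:
    """Whether each framework condition holds for one query (hypothetical inputs)."""
    structured_official_source: bool  # Condition 1
    independent_corroboration: bool   # Condition 2
    entity_disambiguation: bool       # Condition 3


def expected_outcome(c: QueryCoverage) -> str:
    """Map condition coverage to the outcome the framework describes."""
    # Condition 1 is checked first: without an official source, engines synthesize.
    if not c.structured_official_source:
        return "construct: engines synthesize from other signals"
    # Condition 2: official source is weighted lower and supplemented with inference.
    if not c.independent_corroboration:
        return "partial: official source weighted lower, supplemented with inference"
    # Condition 3: the consequence here is an assumption, not stated in the framework.
    if not c.entity_disambiguation:
        return "at risk: ambiguous entity context"
    return "cite: all three conditions hold"


print(expected_outcome(QueryCoverage(True, True, True)))
```

The Octovalve query lacks a structured official source. For that query, `expected_outcome` returns the "construct" branch regardless of the other two inputs.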

The Honest Assessment

Tesla’s AI entity control problem is not a crisis. Tesla is too large, too culturally embedded, and too financially documented for AI engines to produce fundamentally wrong answers about its core business. The leakage in this dataset — synthesized layoff statements, reconstructed engineering explanations, extended product timelines from Musk posts — represents a reputational and accuracy risk, not an existential visibility failure.

But Tesla is being used, explicitly and implicitly, as a template for brand communications strategy. The “just go direct, cut the PR middlemen, post on social” narrative is appealing. For a handful of founder-led brands with extraordinary social reach, it may be defensible on human media terms. On AI engine terms, this dataset shows it is not sufficient even for the brand most associated with it.

“Tesla proved you can cut your PR department and still dominate human media coverage. It has not proved you can cut your PR department and maintain entity control in AI-generated answers. Those are different problems requiring different infrastructure.”

— David Chamberlain, Tampa Web Technologies

The brands that will have strong AI entity control in 2027 are not the ones that post the most on X. They are the ones that did three things:

- built structured, citable, answer-first content on their own domains;
- covered their technical differentiators with documentation rather than assuming engineers would explain them on YouTube;
- earned independent editorial coverage from sources that have no commercial stake in getting the brand right.

That is not a PR strategy. It is a content architecture strategy. The distinction matters, because it means the work is something every brand can do — with or without a founder who has 220 million followers.

Research data note

All engine response data in this article was collected manually across ChatGPT, Gemini, and Perplexity during Q1 2026. Responses are characterized by entity control level (Strong / Partial / Weak) and information leakage level (Low / Medium / High) based on the primary source type used and the degree to which the engine’s answer extended beyond verifiable official documentation. Full dataset available on request via the contact form at tampawebtech.com/contact/
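The research note does not publish its scoring procedure. The following is a hypothetical Python reconstruction of how a rubric of this shape could be applied to one engine answer:

- control level is taken from the primary source type;
- leakage level is taken from the share of claims that extend beyond verifiable official documentation.

The source-type categories and the 20% / 50% thresholds are illustrative assumptions, not the study's values.

```python
from enum import Enum


class Control(Enum):
    STRONG = "Strong"
    PARTIAL = "Partial"
    WEAK = "Weak"


class Leakage(Enum):
    LOW = "Low"
    MEDIUM = "Medium"
    HIGH = "High"


# Hypothetical mapping from an answer's primary source type to control level.
SOURCE_CONTROL = {
    "official_document": Control.STRONG,  # e.g. IR release, SEC filing, official report page
    "executive_social": Control.PARTIAL,  # e.g. founder posts on X
    "third_party": Control.WEAK,          # e.g. teardowns, forums, unapproved press
}


def rate(primary_source: str, claims_total: int, claims_unverified: int):
    """Rate one engine answer; the thresholds below are illustrative assumptions."""
    if claims_total <= 0:
        raise ValueError("claims_total must be positive")
    if not 0 <= claims_unverified <= claims_total:
        raise ValueError("claims_unverified must be between 0 and claims_total")
    # Unknown source types are treated as weak control.
    control = SOURCE_CONTROL.get(primary_source, Control.WEAK)
    # Share of the answer's claims not traceable to official documentation.
    share = claims_unverified / claims_total
    if share < 0.2:
        leakage = Leakage.LOW
    elif share < 0.5:
        leakage = Leakage.MEDIUM
    else:
        leakage = Leakage.HIGH
    return control, leakage
```

Two examples of how the sketch behaves:

- `rate("official_document", 5, 0)` returns `(Control.STRONG, Leakage.LOW)`, the pattern reported for quarterly deliveries.
- `rate("third_party", 4, 3)` returns `(Control.WEAK, Leakage.HIGH)`.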

David Chamberlain is a search strategist and founder of Tampa Web Technologies, where he focuses on the intersection of AI and search visibility. His work centers on Answer Engine Optimization (AEO), Generative Engine Optimization (GEO), and the structural changes reshaping how businesses appear in AI-driven results. David has 17 years of experience in technology.

He writes regularly on AI search updates, industry shifts, and the evolving dynamics of zero-click discovery, providing analysis designed for business leaders and technical teams.